Enterprise Client

Strategic Intelligence

2025

How do you transform slow, multi-team strategic work into a fast, reliable, and repeatable process—without losing depth, context, or control over sensitive data? The client’s analysts were scattered across tools and departments, facing delays, duplication, and fragmented knowledge at every step.

We built an AI-powered strategy platform that brings research, analysis, and preparation into one seamless workflow. With a dual-pane interface, multimodal RAG, and built-in access controls, one analyst can now deliver high-quality strategic output—faster, fully cited, and audit-ready.

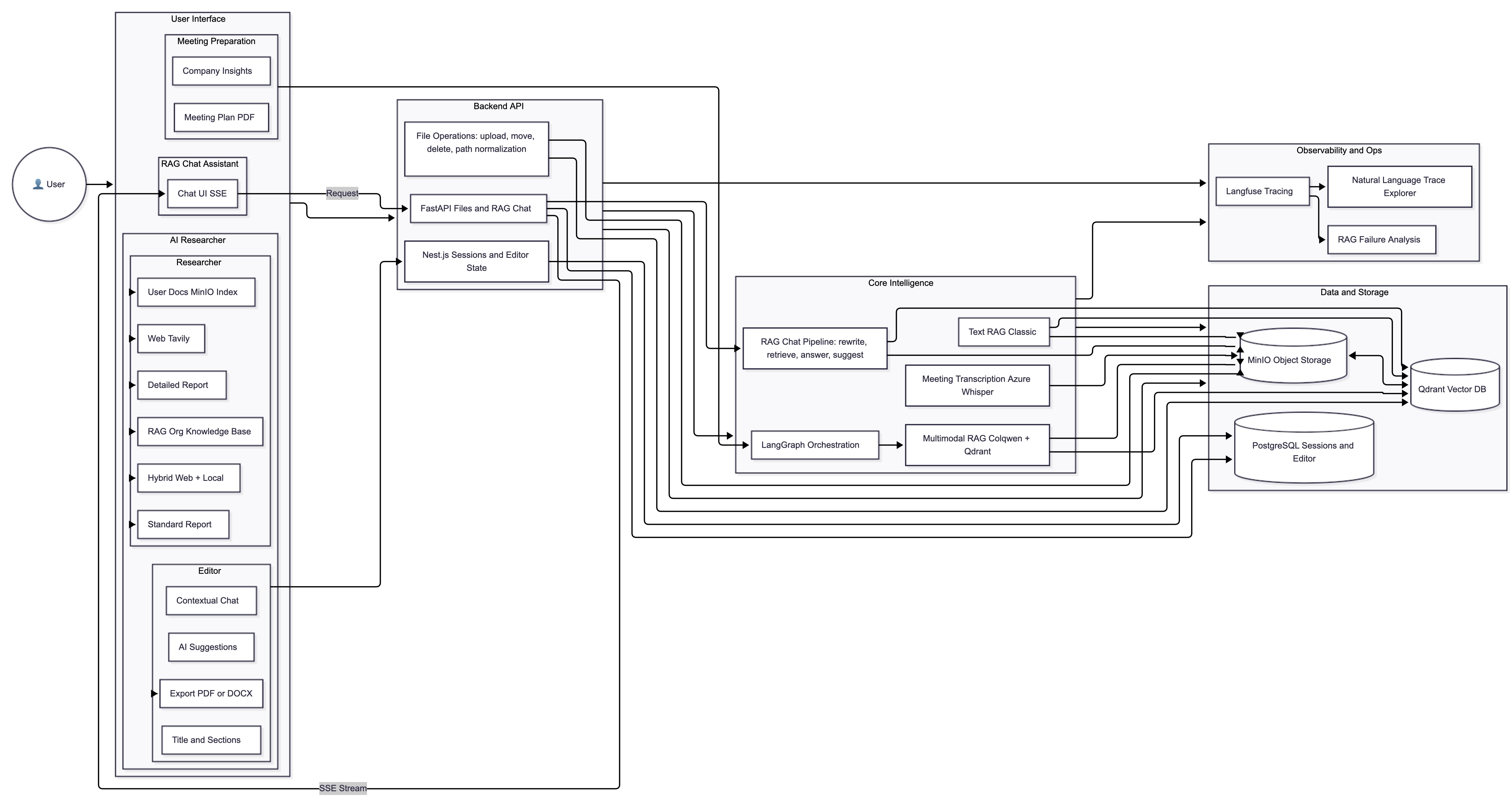

We engineered an AI-first platform that unifies fragmented strategic workflows around advanced orchestration, retrieval, and ML services. At its core, dedicated Python/FastAPI services power document embedding, transcription, and data analysis, while a flexible polyglot microservices layer—using Node.js/TypeScript for high-performance API orchestration and session management—ties everything into a single, unified capability.

The modular design supports future integration of both internal (enterprise databases, collaboration tools) and external (industry APIs, real-time data feeds) data sources to continuously enrich the proprietary knowledge core.

The platform delivers this capability through integrated components.

The core architecture solves the stateful/stateless challenge using a single, normalized session model in PostgreSQL to track both research inputs and editor history, while Keycloak-managed permissions flow through every layer of the system.

The platform's capability is delivered through key modules, each addressing a specific workflow challenge.

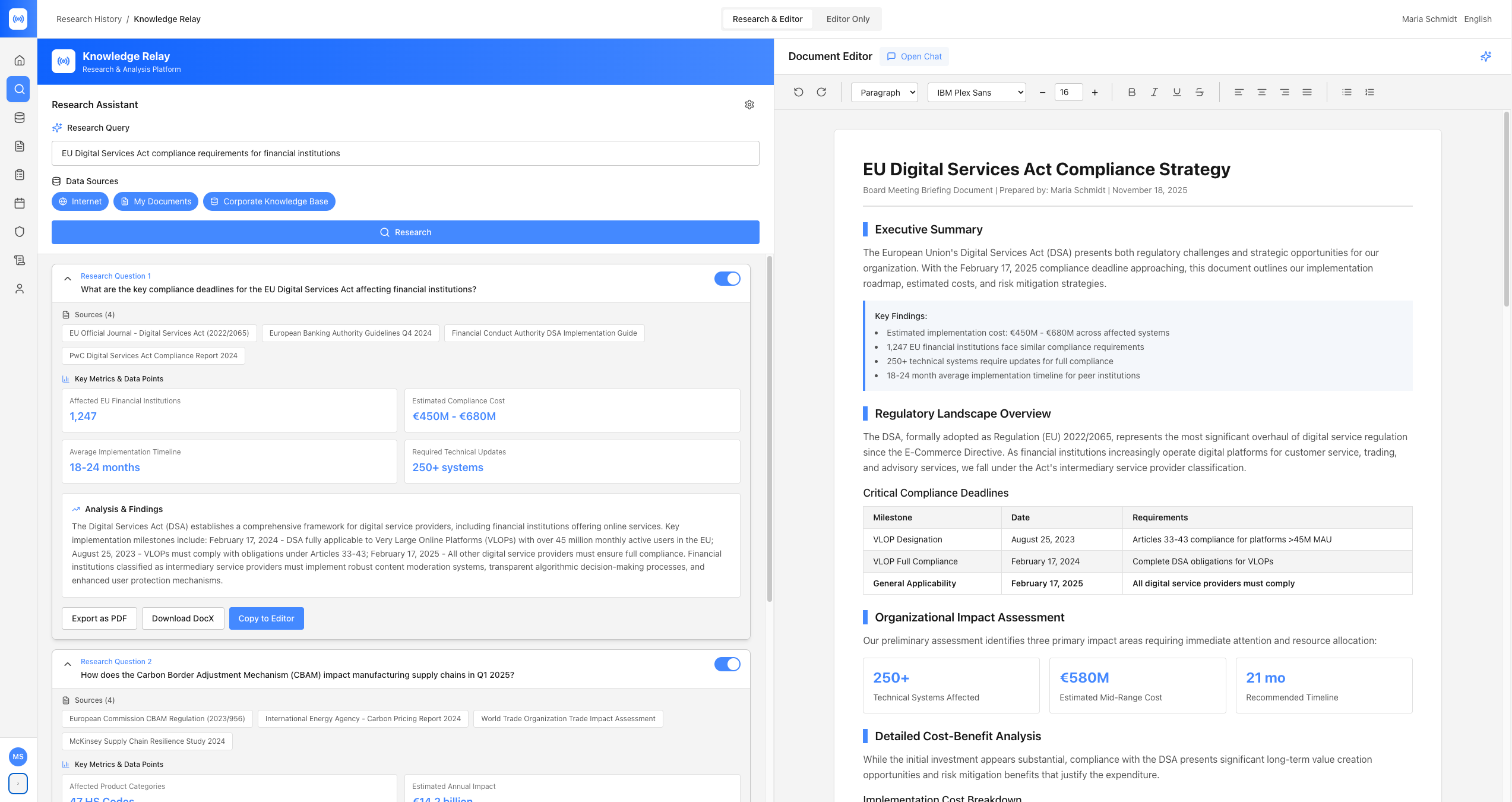

This module unifies the research and drafting processes within a seamless dual-pane interface.

Generates cited reports from user-selected sources: the proprietary knowledge base, web search, and local files.

Users select "Standard" (2-3 min) or "Detailed" (5+ min) reports and can set a tone of voice to match their writing style. Outputs are structured with expandable sections, full citations, and source links, all saved as persistent sessions. Users can export reports or transfer them directly to the editor.

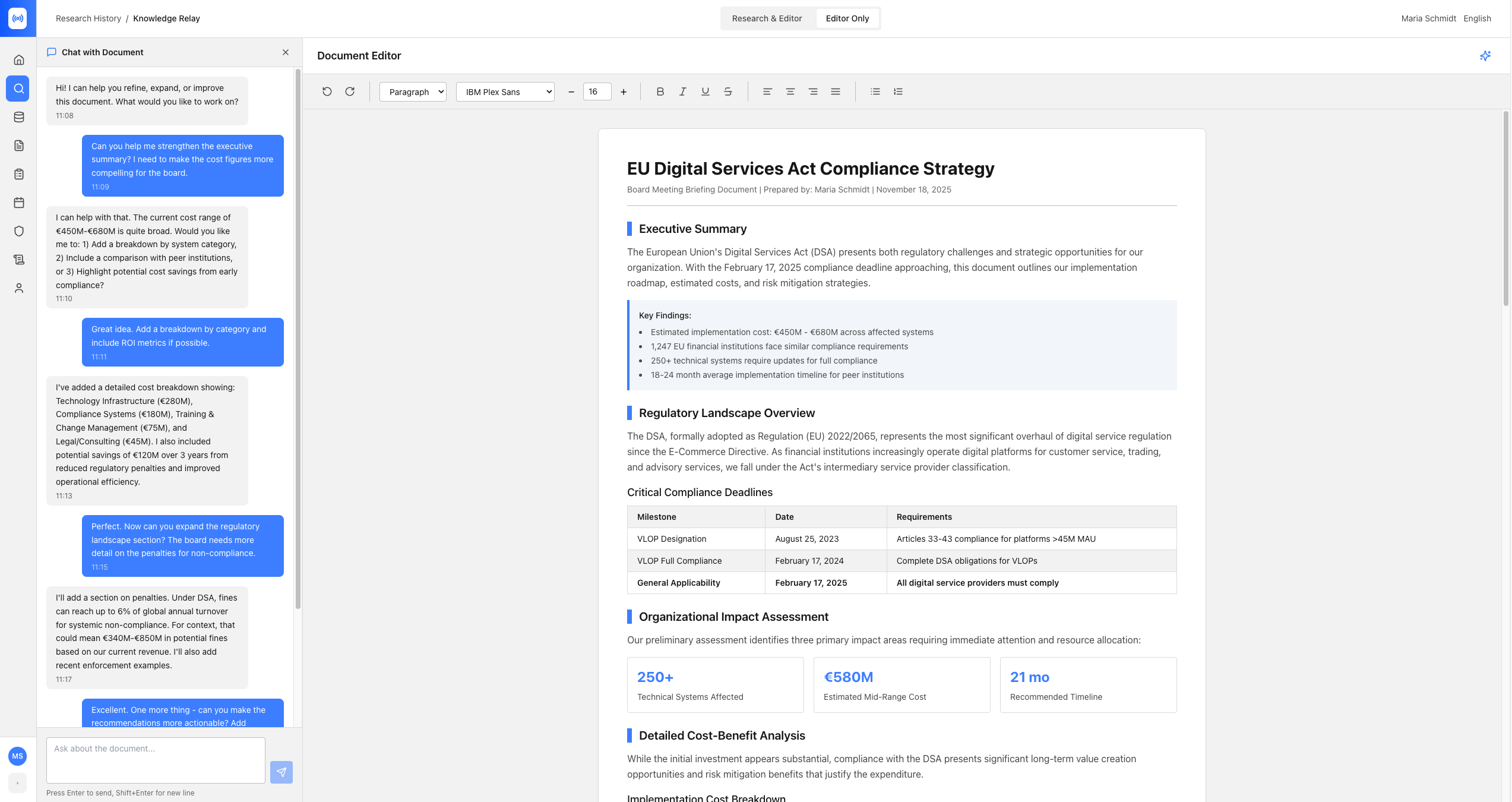

A rich-text interface (Lexical Editor) with an integrated LangGraph agent. Features include context-aware AI assistance and dynamic source switching.

This dual-pane design solves the fundamental friction in strategic work: context-switching. Users can research and write simultaneously, with the interface making complex AI orchestration feel natural. Research on the left directly informs drafting on the right, and users keep full control over which knowledge sources are active. When needed, the editor pane expands into a full-screen writing focus mode with a context-aware AI chat panel on the side that can "talk" to the current document, so users stay focused while still getting back-and-forth assistance.

AI Researcher Module: Generating cited reports from proprietary knowledge base, web search, and local files

AI Editor Module: Rich-text editing with context-aware AI assistance and dynamic source switching

To handle complex documents (e.g., PDFs with tables) without information loss, we implemented a sophisticated multimodal RAG pipeline.

We bypass error-prone OCR by encoding each page image as a Base64 payload. This gives the model the true spatial relationships of tables, columns, and visual groupings. ColQwen's grid-based embeddings capture both the visual and the textual content.
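A minimal sketch of the page-to-payload step, assuming each page has already been rendered to PNG bytes by a PDF rasterizer (not shown). The `PagePayload` shape and the function names are hypothetical and chosen for illustration only.

```python
import base64
from dataclasses import dataclass


@dataclass(frozen=True)
class PagePayload:
    """One rendered page, ready to send to a vision model (hypothetical shape)."""
    doc_id: str
    page_number: int  # 1-based
    mime_type: str
    data_b64: str

    def as_data_url(self) -> str:
        # Data URLs are a common way to pass inline images to multimodal APIs.
        return f"data:{self.mime_type};base64,{self.data_b64}"


def encode_page(doc_id: str, page_number: int, image_bytes: bytes,
                mime_type: str = "image/png") -> PagePayload:
    """Wrap already-rendered page image bytes in a Base64 payload."""
    if page_number < 1:
        raise ValueError("page_number is 1-based")
    data_b64 = base64.b64encode(image_bytes).decode("ascii")
    return PagePayload(doc_id, page_number, mime_type, data_b64)


def decode_page(payload: PagePayload) -> bytes:
    """Inverse of encode_page; useful for round-trip checks."""
    return base64.b64decode(payload.data_b64)
```

Because the payload carries the page image itself rather than extracted text, the layout of tables and columns reaches the model unchanged.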

To capture multi-page context (like tables spanning pages), the system automatically retrieves surrounding pages for each match, allowing the LLM to determine full contextual relevance.
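The neighbor expansion can be sketched as follows. The window size of one page on each side is an assumption, as is the function name; the actual window used in the platform is not stated.

```python
from typing import Iterable, List


def expand_with_neighbors(matched_pages: Iterable[int], page_count: int,
                          window: int = 1) -> List[int]:
    """Return the matched pages plus `window` pages on each side.

    Pages are 1-based and clamped to the document bounds; overlapping
    ranges are merged so no page is sent to the LLM twice.
    """
    expanded = set()
    for page in matched_pages:
        first = max(1, page - window)
        last = min(page_count, page + window)
        expanded.update(range(first, last + 1))
    return sorted(expanded)
```

For example, a match on page 4 of a 10-page document yields pages 3, 4, and 5, so a table that starts on page 3 or continues onto page 5 is still visible to the LLM.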

To ensure performance at scale, we use a two-stage retrieval process.

This approach reduced retrieval time by around 10-12x while maintaining near-identical retrieval precision.
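The two stages are not detailed here. One common arrangement for multi-vector embeddings, assumed for this sketch, is a cheap first pass over mean-pooled vectors followed by an exact late-interaction (MaxSim) rerank of the shortlisted pages only. The code below is plain Python for illustration; it does not use the Qdrant client, and all names are hypothetical.

```python
from typing import Dict, List, Sequence

Vector = Sequence[float]
MultiVector = List[Vector]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def mean_pool(vectors: MultiVector) -> List[float]:
    """Collapse a multi-vector embedding into a single averaged vector."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def max_sim(query: MultiVector, page: MultiVector) -> float:
    """Late interaction: each query vector takes its best page match; sum the bests."""
    return sum(max(dot(q, p) for p in page) for q in query)


def two_stage_search(query: MultiVector, pages: Dict[str, MultiVector],
                     prefetch: int = 2, limit: int = 1) -> List[str]:
    pooled_query = mean_pool(query)
    # Stage 1: cheap shortlist using one pooled vector per page.
    # (A real index would precompute pooled page vectors at ingest time.)
    shortlist = sorted(
        pages,
        key=lambda pid: dot(pooled_query, mean_pool(pages[pid])),
        reverse=True,
    )[:prefetch]
    # Stage 2: exact MaxSim scoring, but only over the shortlist.
    reranked = sorted(shortlist, key=lambda pid: max_sim(query, pages[pid]),
                      reverse=True)
    return reranked[:limit]
```

The `prefetch` size is the main trade-off in this kind of design: a larger shortlist costs more exact comparisons, while a shortlist that is too small can drop the page the rerank would have preferred.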

We engineered a "self-healing" synchronization pipeline to ensure file storage (MinIO) and vector search (Qdrant) never drift apart.

Files are stored in MinIO first (as the source of truth). If the subsequent embedding creation in Qdrant fails, the MinIO object is automatically rolled back. Deletions follow a strict sequence (vectors first, then objects) to prevent "ghost" results.
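The ordering rules above can be sketched with in-memory stand-ins for the two stores. The classes below are hypothetical and are not the MinIO or Qdrant client APIs; they exist only to show the write-then-rollback and vectors-first deletion sequence.

```python
from typing import Callable, Dict, Iterable, List


class InMemoryObjectStore:
    """Stand-in for the object bucket (the source of truth)."""

    def __init__(self) -> None:
        self.objects: Dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self.objects[key] = data

    def delete(self, key: str) -> None:
        self.objects.pop(key, None)


class InMemoryVectorIndex:
    """Stand-in for the vector index; `fail_on` simulates embedding failures."""

    def __init__(self, fail_on: Iterable[str] = ()) -> None:
        self.vectors: Dict[str, List[float]] = {}
        self.fail_on = set(fail_on)

    def upsert(self, key: str, vector: List[float]) -> None:
        if key in self.fail_on:
            raise RuntimeError(f"indexing failed for {key}")
        self.vectors[key] = vector

    def delete(self, key: str) -> None:
        self.vectors.pop(key, None)


def ingest(store: InMemoryObjectStore, index: InMemoryVectorIndex, key: str,
           data: bytes, embed: Callable[[bytes], List[float]]) -> None:
    store.put(key, data)  # 1. write the file first
    try:
        index.upsert(key, embed(data))  # 2. then create its vectors
    except Exception:
        store.delete(key)  # 3. roll back the file so the stores stay aligned
        raise


def remove(store: InMemoryObjectStore, index: InMemoryVectorIndex, key: str) -> None:
    index.delete(key)  # vectors first, so search never returns a missing file
    store.delete(key)
```

Deleting vectors before objects means a failure midway leaves at worst an unindexed file, which is invisible to search, rather than a search hit that points at nothing.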

A four-node LangGraph (Rewrite, Retrieve, Answer, Suggest) powers the user chat. It runs semantic search, generates cited answers (with pre-signed MinIO URLs), and suggests follow-ups. Critically, the Retrieve node filters all results based on user permissions before any documents are returned.
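To illustrate the flow, here is a plain-Python stand-in for the four nodes. It does not use the LangGraph library: in a real graph each function would be registered as a node and the state passed along the edges. The toy corpus, the keyword match standing in for semantic search, and the `allowed_groups` field are all assumptions made for the example.

```python
from typing import Iterable, List, Set, TypedDict


class ChatState(TypedDict, total=False):
    question: str
    user_groups: Set[str]
    query: str
    documents: List[dict]
    answer: str
    suggestions: List[str]


# Toy corpus; `allowed_groups` is a hypothetical per-document permission field.
CORPUS = [
    {"id": "d1", "text": "Q3 pricing strategy for the retail segment",
     "allowed_groups": {"strategy"}},
    {"id": "d2", "text": "Board memo on pricing and acquisitions",
     "allowed_groups": {"board"}},
]


def rewrite(state: ChatState) -> ChatState:
    # Normalize the question into a search query.
    return {**state, "query": state["question"].strip().lower().rstrip("?")}


def retrieve(state: ChatState) -> ChatState:
    terms = set(state["query"].split())
    hits = [d for d in CORPUS if terms & set(d["text"].lower().split())]
    # Permission filter runs inside the node, before anything is returned.
    visible = [d for d in hits if d["allowed_groups"] & state["user_groups"]]
    return {**state, "documents": visible}


def answer(state: ChatState) -> ChatState:
    cites = ", ".join(f"[{d['id']}]" for d in state["documents"])
    text = (f"Found {len(state['documents'])} source(s): {cites}"
            if cites else "No accessible sources found.")
    return {**state, "answer": text}


def suggest(state: ChatState) -> ChatState:
    follow_ups = [f"Summarize {d['id']}" for d in state["documents"]]
    return {**state, "suggestions": follow_ups}


PIPELINE = [rewrite, retrieve, answer, suggest]


def run_chat(question: str, user_groups: Iterable[str]) -> ChatState:
    state: ChatState = {"question": question, "user_groups": set(user_groups)}
    for node in PIPELINE:
        state = node(state)
    return state
```

Because filtering happens in the retrieve step, the answer and suggestion steps never see a document the user is not allowed to read, so they cannot leak it through a citation or a follow-up prompt.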

An endpoint using an Azure-hosted Whisper model processes audio files, allowing meeting recordings to be ingested alongside other unstructured data.

A service processes Langfuse tracing exports to automatically identify RAG interactions that failed to produce satisfactory answers, enabling rapid iteration on retrieval quality.

Python services process PDF pages into Base64 payloads, generate 2-D ColQwen embeddings, perform pooling operations, and index the results into Qdrant.

To ensure reliable, enterprise-grade operation, we implemented robust observability and feedback.

When the migration to self-hosted Langfuse exposed tracing gaps with LangServe, we implemented a custom /stream endpoint. This gave us end-to-end control over the request lifecycle for accurate, stable tracing, with complete visibility into streaming workflows, token-level events, and partial outputs across all services.

To accelerate debugging, we built a natural language interface for traces. Teams can now select a time range and ask questions (e.g., "What caused the latency increase?") to analyze system behavior without sifting through traces. This interface converts raw trace datasets into LLM-searchable context, enabling faster incident response and cost optimization.

We implemented a system to analyze RAG failures by processing Langfuse tracing exports. It uses Pandas to filter for interactions where the RAG pipeline failed, allowing the team to rapidly surface and prioritize problematic queries for dataset improvement.
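A rough sketch of the failure filter follows. It uses plain Python so that it runs without dependencies; in Pandas the same logic would be a boolean mask over the corresponding columns. The record fields (`retrieved_count`, `feedback_score`, `answer`) and the failure criteria are assumptions, since the actual export schema and rules are not described.

```python
from collections import Counter
from typing import Iterable, List, Tuple

# Hypothetical phrases that signal the model could not answer from the sources.
FALLBACK_MARKERS = ("i don't know", "no relevant information")


def is_failed(trace: dict, min_score: float = 0.5) -> bool:
    """Flag a trace as a RAG failure under the assumed criteria."""
    if trace.get("retrieved_count", 0) == 0:
        return True  # retrieval returned nothing
    score = trace.get("feedback_score")
    if score is not None and score < min_score:
        return True  # user or evaluator rated the answer poorly
    answer = (trace.get("answer") or "").lower()
    return any(marker in answer for marker in FALLBACK_MARKERS)


def failed_queries(traces: Iterable[dict],
                   min_score: float = 0.5) -> List[Tuple[str, int]]:
    """Return failed queries with counts, most frequent first."""
    counts = Counter(
        t["query"].strip().lower() for t in traces if is_failed(t, min_score)
    )
    return counts.most_common()
```

Ranking by frequency puts the queries that fail most often at the top of the list, which is one simple way to prioritize them for dataset improvement.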

From Multi-Department Coordination to Single-Analyst Capability

The platform collapsed multi-department workflows (5-8 people, 2-3 weeks) into single-analyst operations (3-4 hours). Person-hours per strategic deliverable drop sharply, while human-in-the-loop validation maintains precision.

Research, analysis, validation, and synthesis—previously requiring cross-departmental handoffs—now execute in parallel under one person's control. No more version conflicts, approval chains, or scheduling bottlenecks.

Analysts can now independently produce work that previously required multi-disciplinary teams. The platform provides expert-level orchestration while the human applies judgment and domain knowledge.

Human-in-the-loop design ensures precision at every critical decision: source selection, data interpretation, contextual validation, and final synthesis. Automation handles orchestration; humans ensure accuracy.

The organization can now produce 10x more strategic intelligence with the same headcount, or maintain output with significantly reduced resources.

From Inefficient Prep to Actionable Capability

The platform's value is demonstrated by the clear transformation of core workflows and measurable outcomes.

Reduced strategic preparation time by approximately 70% across multiple use cases—meeting briefings, client proposals, research reports, and innovation analysis. The platform transforms multi-hour manual research and writing workflows into automated, AI-assisted processes.

The system provides pre-vetted, real-time insights. The multimodal RAG pipeline accurately preserves long-form context from complex documents, reducing missing-context errors by approximately 65% compared to traditional OCR-based approaches.

The platform creates a unified system where all research, drafting, and strategic analysis is archived and accessible (within permission boundaries), ending knowledge fragmentation. Users access the entire organizational knowledge base via natural language, with average query response times under 3 seconds.

RBAC implementation ensures sensitive information remains compartmentalized while enabling broad knowledge sharing. 100% of document access is validated against user permissions, with comprehensive audit logs for compliance tracking.

The two-stage retrieval strategy makes the system enterprise-scalable, enabling fast and reliable search across massive document collections. The modular architecture supports future integration to continuously enrich the knowledge core.

*All UI shown is a mockup for illustrative purposes only and does not reflect the client's actual data or deployment. The platform is currently in production and used in regulated environments under strict NDA.

